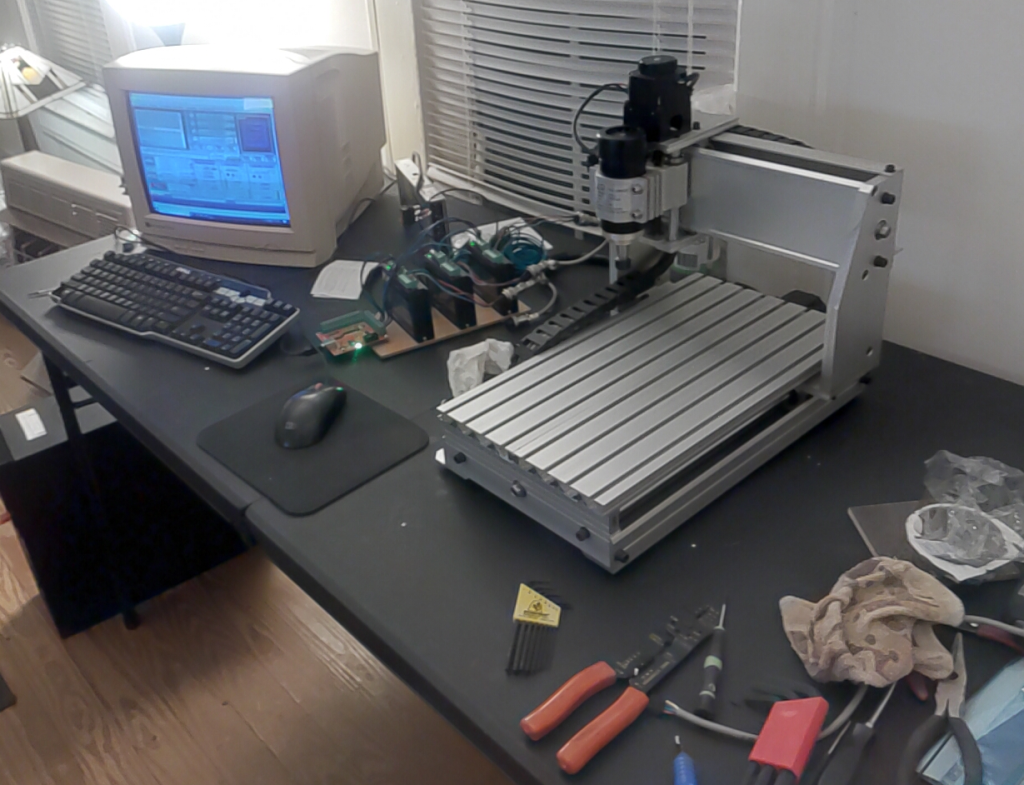

Years ago I bought a little 3040 CNC. It was disappointing. It really is cool for what it is, and it did work, just not well. There isn’t anything wrong with the machine itself; the electronics, however, are not so great. Years later I bought a 6040 CNC, and while it works better and is a much nicer machine, it suffers from the same ailments as the 3040. At some point I decided that using these machines as the basis of a small business was a good idea. The all-in price for a 6040 is affordable, and the machines themselves are pretty solid.

Delving into the idea proved to be a rabbit hole. This is where AI came in. I am not naming the AI; it is, however, impressive and in my opinion the best one. I started asking it questions, and it gave me some useful information. Then, after digging into the topic independently, I had more insight and more questions. Rinse and repeat until the goal was clear. This would be a good time to point out that the AI is lousy at giving opinions and suggestions. Just don’t ask it for them; it will lead to expensive mistakes.

My goal was, and is, to upgrade these cheap, widely available stock CNCs into consistent, reliable, and accurate machines for production use. Coming from a solid background in programming, designing control systems, and using CNCs helps me understand what I am seeing and experiencing. Not being dependent only on the AI has given me insight into both the strengths and the flaws of AI.

AIs are cool, let me state that. I am not knocking them. There are different kinds, and that is beyond the scope here. I am referring to what is called a Large Language Model (LLM) running on what is called an inference engine. It did not take long to realize that if you are going to use and depend on this charming, seemingly wicked-smart algorithm to make real decisions in the real world, it helps to understand what you are dealing with from a computer science perspective. The illusion is intoxicating, but sober analysis reveals something deeply flawed in some ways and incredibly helpful and useful in many others.

An LLM is basically a sorting engine in which every input is connected to every other element through a stack of what they call layers. The input is sifted through those layers again and again, and each pass returns the closest match for what should come next. Yes, it’s an oversimplification, but it is basically how it works. Training data is converted to what they call tokens. It takes months on crazy powerful computers to train one, and it is extremely processor intensive. Just running a small one with 8 billion parameters requires gigabytes of RAM and a massive amount of processing power to even begin to seem interactive. I experimented with one on a Linux box, and it was an adventure in and of itself. It is sitting here next to me on a dedicated box with some pretty decent specs. It is simplistic, yet it is also a marvel. During that learning curve I came to understand that the way to make an AI yours is through what they call fine-tuning. Sounds simple enough, doesn’t it? It isn’t, and it’s on my list of things to understand and develop once I get my CNCs behaving.
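To put a rough number on the RAM needed for an 8 billion parameter model, here is a back-of-the-envelope sketch in Python. It assumes the weights dominate memory use and that every parameter is stored at one fixed precision. The function name and the precision table are made up for illustration, and a real setup needs extra room for the conversation context and runtime overhead.

```python
# Rough estimate of the memory needed just to hold an LLM's weights.
# Assumption: weights dominate; context cache and runtime overhead are extra.

# Bytes used to store one parameter at common precisions.
BYTES_PER_PARAM = {
    "fp32": 4.0,   # full precision
    "fp16": 2.0,   # half precision
    "int8": 1.0,   # 8-bit quantized
    "int4": 0.5,   # 4-bit quantized
}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Return the weight size in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


if __name__ == "__main__":
    # The 8 billion parameter model size mentioned in the text.
    for precision in BYTES_PER_PARAM:
        size = weight_memory_gb(8e9, precision)
        print(f"{precision}: about {size:.0f} GB for the weights alone")
```

Under these assumptions, the half-precision weights alone come to about 16 GB, which is why quantized versions are the usual choice on home hardware.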

The training aspect is pretty much out of the average user’s reach. There are several LLMs available to download and run on your own gear, the idea being that you have your own sandbox to play in. The caveat is that what you get is a generalized, very smart, and resourceful chatbot. If you want it to be useful for what interests you, it has to be fine-tuned. This is still beyond my skill set, but it is definitely on the list of things to do. I am repeating this for emphasis. There is a lot of self-validating incorrect data on the internet. I used to call it sporge; the Oxford dictionary added a word this year, slop, and it works too. This slop is used to train AIs, and since they are more like a parrot with a method of filtering called weighting, they can produce some rather erroneous replies. Remember, it’s just an inference machine. It doesn’t think.

Now on to my main point. In the process of upgrading and configuring this CNC, the AI provided some very insightful information that prevented some potentially confusing and infuriating mistakes. It also relied heavily on data provided by people who don’t really understand the topic.

So yesterday I smoked my X axis stepper. The machine was running really well, nothing like my previous experience with it. The fact of the matter is it’s awesome. That is, until it started screaming in pain and smoked the motor. So back to the AI I went, and it tried to convince me that I needed to attach heat sinks and fans to the motors. This is a ridiculous solution. The silly AI even showed me pictures of rigs with heat sinks and fans on them. When I pointed out how utterly stupid this was, it dug in and started to argue with me. This is not the first time it has done that, either. It tends to do what they call hallucinate, and if you are going to use AI as an assist, be very aware of this phenomenon.

For the past two hours the machine has been happily running the test program that burned my X axis motor yesterday. The real solution was to dial down the motor current and slow down the rapid speed and acceleration.

Now, I could go on and on about the “hobbyist” community, their insights, perceptions, and goals, and how this happened because the AI was getting its weighted data from a bunch of overeager quislings. That is not my purpose. The goal, as stated earlier, is to turn a good machine with lousy electronics into something consistent, reliable, and accurate. I am well on my way to getting there. AI helped a whole lot and saved me months, if not years, of experimentation and lots of cash too. However, be warned.